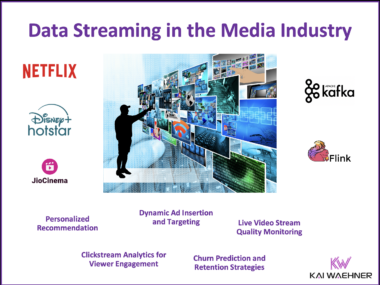

Data Streaming with Apache Kafka and Flink in the Media Industry: Disney+ Hotstar and JioCinema

The $8.5 billion merger of Disney+ Hotstar and Reliance’s JioCinema marks a transformative moment for India’s media industry, combining two of the country’s most influential streaming platforms into a data streaming powerhouse. This blog post explores how Apache Kafka and Apache Flink power these platforms, enabling massive-scale content distribution, real-time analytics, and user engagement. With replication tools like MirrorMaker and Cluster Linking, the merger presents opportunities for seamless Kafka migrations, hybrid multi-cloud flexibility, and innovations like multi-angle viewing and advanced personalization. Both platforms have been transparent about their Kafka-based architectures, which highlights their technical leadership and offers lessons for the data streaming community. Integrating their infrastructures sets the stage for redefining media streaming in India and provides insights and benchmarks for organizations that use data streaming at scale.